How putting yourself in the mindset of a stressed person might just save lives

Introduction

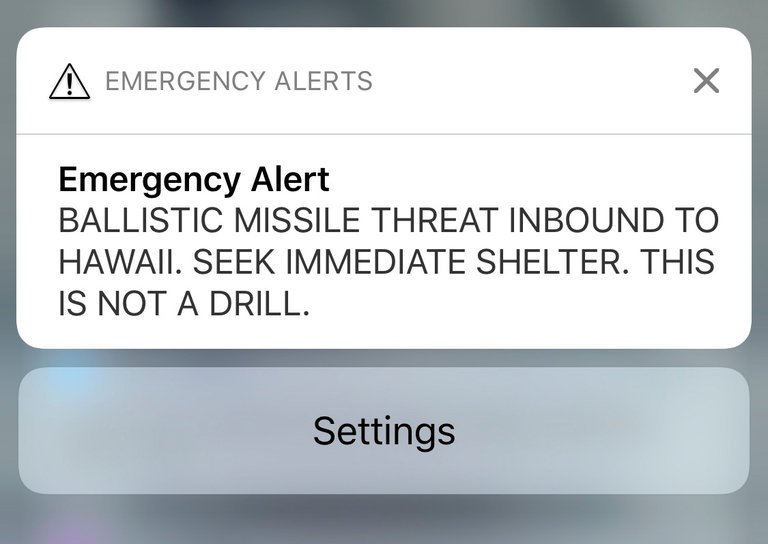

On Saturday 13th January 2018, residents of the US state of Hawaii received an alert on their phones. Instead of a text from their relatives, or the latest football scores, the alert carried the ominous message "BALLISTIC MISSILE THREAT INBOUND TO HAWAII. SEEK IMMEDIATE SHELTER. THIS IS NOT A DRILL". The threat of nuclear war already seemed real, fuelled by the US President's tweets and by the continual goading and threats North Korea broadcast on its state television networks. Against that backdrop, the announcement was met with derision, confusion and, above all, widespread panic that turned out to be unwarranted. A second message later confirmed that the first had been a "false alarm". During a shift change at the Hawaii Emergency Management Agency, an employee who intended to send a "test" message had sent a "live" one instead. The Governor of the state apologised publicly, and those responsible were left deeply embarrassed.

In the days that followed, much was said about how such a mistake could have been made. Beyond the immediate panic, the incident carries a "cry wolf" cost. If the alert is ever sent again, recipients may greet it with skepticism instead of acting on the advice immediately, and immediate action is crucial in the estimated 20 minutes it would take a nuclear missile to reach Hawaii from North Korea.

Beyond its social and political dimensions, the incident raises questions about the design of the system used to send these alerts, and about how to make sure operators can tell whether they are sending a "test" or a "live" message. Two luminaries of the design world have written insightful pieces on what went wrong and how better design principles could prevent it in future: Don Norman, author of The Design of Everyday Things, and Jared Spool, founder of UIE and the Center Centre. In his piece for Co.Design, Norman focuses on our tendency to blame people rather than poor design, and argues that the system should have been thoroughly tested before it went live. Spool's piece on Medium focuses on the system's poorly chosen file names and poorly designed software.

Both pieces reach a similar, and vital, conclusion. When we design and build a system, we should always consider what it will be like for someone to use it under stressful conditions. We may never design alert mechanisms for incoming nuclear missiles, but we should still consider how easy our webpage, app or system is to use when the user is pushed by a tight deadline, for example, or is being distracted in some way. As it happens, my own workplace is causing stress for my colleagues and me at this very moment: work is being done on the roof, and we are frequently subjected to loud bangs and the sound of hammering and drilling. These interruptions often distract us from what we're doing, so we lose our place in our work and have to spend time reacquainting ourselves with the task at hand. In this situation we commonly make mistakes, because our brains make incorrect assumptions, and we then have to notice those mistakes and correct them.

So, how do we design for these stressful situations? Thankfully, much of this is already built into the skills we learn in User Experience Design. Giving structure to pages and forms, giving visual dominance to important elements, and clearly labelling and colouring key features can all help the brain work out more quickly what needs to be done.

We also need to consider what would happen if a user made a mistake. How easy would it be to choose the wrong thing? Can an error be easily rectified? Does the system clearly show the user's selections, so that they can go back and fix them?

We can even test these things ourselves: take a prototype or test version of the system to a busy or noisy environment and ask people to try it. Compare the results with those of people in a quiet, calm place, and see whether there is a difference in successful outcomes. The more we trial the system or software, the more confident we can be of its success. We often say that good design and testing can improve revenue or user experience, but some day, as the Hawaii missile alert shows, they might just save our lives.
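To make the question "How easy would it be to choose the wrong thing?" more concrete, here is a minimal Python sketch of one common safeguard. It states the selected mode prominently and asks the operator to type that mode's name before anything is sent. Everything in it (`AlertMode`, `confirmation_prompt`, `confirm_send` and the prompt wording) is hypothetical. It is not taken from the real Hawaii system or from Norman's or Spool's articles.

```python
from enum import Enum


class AlertMode(Enum):
    """Hypothetical modes for an alert-sending tool (illustrative only)."""
    TEST = "TEST"
    LIVE = "LIVE"


def confirmation_prompt(mode: AlertMode, message: str) -> str:
    """Build a prompt that states the selected mode before anything else."""
    if mode is AlertMode.LIVE:
        banner = "*** LIVE ALERT: THIS WILL BE SENT TO THE PUBLIC ***"
    else:
        # Assumed behaviour of the hypothetical tool: test alerts stay internal.
        banner = "[TEST ALERT: NOT SENT TO THE PUBLIC]"
    return f"{banner}\n{message}\nType {mode.value} to confirm, or anything else to cancel."


def confirm_send(mode: AlertMode, typed: str) -> bool:
    """Return True only if the operator typed the selected mode's name exactly.

    Surrounding whitespace is ignored, but the word itself must match. A single
    mis-click on the wrong menu item cannot send an alert on its own, and an
    operator who believes they chose TEST cannot confirm a LIVE send by typing TEST.
    """
    return typed.strip() == mode.value


if __name__ == "__main__":
    print(confirmation_prompt(AlertMode.LIVE, "Example message text"))
    print(confirm_send(AlertMode.LIVE, "TEST"))  # False: the mismatch cancels the send
```

The design choice here is that the operator must actively state which mode they are in instead of simply clicking "OK". If what they think they chose differs from what they actually chose, the system fails safe by cancelling.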